Ethical AI Explained: What Every User Should Understand

Artificial intelligence has moved beyond science fiction to become a significant part of our daily lives. Whether you are scrolling through your favorite social media feed, asking Siri for the weather on your Apple device, or using a voice assistant on a Windows computer, AI is there. It is also embedded in healthcare systems that help doctors make life-saving decisions. Whatever the application, these systems share a common thread: they influence the decisions we make every day. That influence will only grow as new applications emerge, which makes it more important than ever to understand the ethical framework that should govern these powerful tools.

What is Ethical AI?

At its core, responsible AI is about how we create, deploy, and use these systems in accordance with shared values and social principles. Ethical AI means developing and using technology in a manner that is fair, transparent, and safe. Because AI is now involved in so many sensitive areas, from hiring to healthcare, the general public needs a firm understanding of what makes a system ethical.

When AI systems are developed without deliberate attention to ethics, they can discriminate, perpetuate existing social inequalities, or invade personal privacy. To prevent this, ethical AI requires that all people be treated fairly and that the sensitivity of their personal data be respected. It also demands that the way a decision was reached be communicated clearly and understandably. Perhaps most importantly, those who create and deploy these systems must take responsibility for the systems' behavior and the real-world consequences they produce.

Why Ethical AI Matters to Everyone

One of the major misconceptions about artificial intelligence is the belief that it is a purely objective technology. Many people assume that because a machine is processing data, the result must be free from human prejudice. This is not true. An AI system reflects the data it is exposed to during training. If that data contains biases, the system can learn them and may even amplify them.

We can see the real-world implications of this in several critical sectors. In hiring, for instance, some AI tools have been found to discriminate against certain demographics because they were trained on historical data that favored one group over another. In healthcare, if the training data for a diagnostic AI is too small or lacks diversity, the system is more likely to make diagnostic mistakes, potentially leading to poor patient outcomes. Even our social media feeds are affected: recommendation algorithms can unintentionally spread misinformation or promote polarizing content if they are not built with ethical guardrails. Because we use these systems daily as both employees and consumers, understanding AI ethics is relevant to every one of us.

The Principles of Ethical AI

To manage the risks of applying AI technology, a number of guiding principles have been established for developing ethical systems. These principles give both developers and users a framework for ensuring that technology serves the public good.

1. Fairness and Non-Discrimination

The decisions made by an AI system should never be discriminatory. A classic example comes from the financial sector. If a loan-approval AI begins to favor certain zip codes over others, it judges applicants on geography rather than financial merit, and because where people live often correlates with characteristics such as race or income, it can discriminate indirectly. A fair AI system is designed and tested to avoid such pitfalls. As a user, it helps to ask how decisions that affect you are reached. Companies that are open about their work should be able to explain what their models are doing and what methods they use to reduce bias.
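
To make the zip code example concrete, the following is a minimal sketch of one common fairness check: comparing approval rates across groups. The function names, the sample data, and the 0.8 threshold (borrowed from the "four-fifths" rule of thumb used in some employment contexts) are assumptions chosen for illustration, not a description of how any particular lender audits its models.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the share of approved applications for each group.

    `decisions` is an iterable of (group, was_approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest approval rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved anywhere, so no group is favored
    return min(rates.values()) / highest

# Hypothetical loan decisions, grouped by an invented zip code label.
sample_decisions = [
    ("zip_A", True), ("zip_A", True), ("zip_A", True), ("zip_A", False),
    ("zip_B", True), ("zip_B", False), ("zip_B", False), ("zip_B", False),
]

rates = approval_rates(sample_decisions)
ratio = disparate_impact_ratio(rates)

# Illustrative threshold only; a real audit would choose and justify its own.
needs_review = ratio < 0.8
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove discrimination on its own, since groups can differ in legitimate financial factors, but it signals that the model's behavior deserves a closer look.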

2. Transparency

It is vital for users to understand how an AI system arrives at a decision. This is often referred to as "explainability." For instance, AI-powered medical imaging tools, such as those Google has worked on, can present explanations alongside their proposed diagnoses, which helps doctors understand the logic behind a prediction rather than following it blindly. You should seek out systems that explain their recommendations clearly, as a lack of clarity is often a red flag for irresponsible AI development.

3. Accountability

Responsibility for an AI system's behavior must be clearly defined from the start. Consider the case of a self-driving vehicle. If a critical error occurs, the car's manufacturer, the software developers, or the relevant authorities must be able to investigate exactly what went wrong. As a user, you should try to understand who is liable when you use AI systems, especially in high-stakes applications like finance or medicine.

4. Privacy and Data Protection

Ethical AI takes data privacy seriously and follows strict data protection principles. One commonly cited example is how Apple handles Siri data. By processing many requests on the device itself, Apple limits the amount of data sent to a central server, which helps protect the user's privacy. When choosing AI solutions, it is wise to examine privacy policies and opt for services that either process data locally or use techniques like masking to protect your personal identity.
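
As a rough illustration of what "masking" can mean in practice, the sketch below hides email addresses in free text and replaces a user identifier with a salted hash before the data would leave a device. The function names, the simplified email pattern, and the salt value are all hypothetical; production systems typically rely on vetted privacy tooling rather than a few lines like these.

```python
import hashlib
import re

# Simplified pattern for illustration; real email matching is more involved.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

def mask_emails(text):
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)

def pseudonymize(user_id, salt):
    """Return a short, stable token that stands in for the real identifier.

    The same user_id and salt always give the same token, so records can
    still be linked without storing the original identifier.
    """
    digest = hashlib.sha256((salt + user_id).encode("utf-8")).hexdigest()
    return digest[:12]

# Hypothetical usage with invented values.
message = "Contact jane.doe@example.com today"
masked_message = mask_emails(message)
token = pseudonymize("user-1234", salt="local-secret")
print(masked_message, token)
```

Note that salted hashing is pseudonymization rather than full anonymization: anyone who holds the salt and a list of candidate identifiers could recompute the tokens, so the salt needs to be kept secret.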

5. Safety and Security

AI systems must be resistant to manipulation and abuse. AI-powered cybersecurity systems can detect and prevent phishing attacks in real time, but the same techniques can be used for fraud if they end up in the wrong hands. This is why it is so important to develop defenses specifically designed to protect individuals from AI-driven threats. Where possible, use technology developed by reputable organizations and stay up to date on any reported vulnerabilities or breaches.

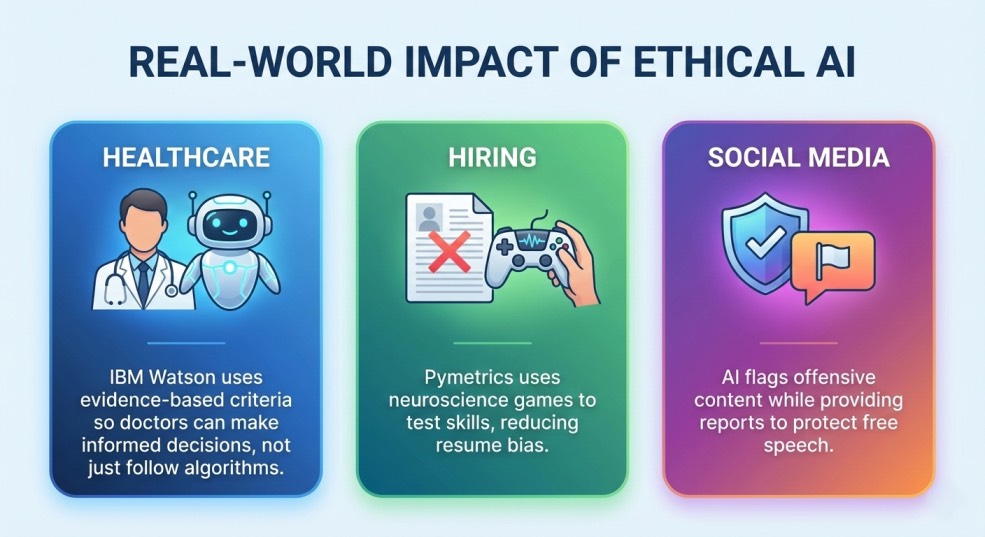

Real-World Applications of Ethics in AI

We can see these ethical principles in action through various tools and platforms. In healthcare, IBM Watson Health was built to apply specific evaluative criteria to its AI recommendations, with the aim of making the advice given to medical professionals evidence-based and explainable. The intended impact is significant: doctors can examine the AI's suggestions and make informed decisions rather than simply trusting a "black box" system.

In the world of recruitment, a firm called Pymetrics uses neuroscience-based games to assess candidates. By focusing on skills and personality traits rather than only the traditional resume, this approach aims to reduce the limitations and historical biases of resume-based screening. The goal is for job seekers to be judged more fairly and to have a more equal opportunity for selection.

Even social media moderation is seeing a shift toward ethics. Content moderation tools such as those offered by Microsoft are designed to identify offensive material, and they are increasingly paired with transparency reports. This helps users understand why certain content was flagged, which in turn promotes a better balance between community safety and freedom of speech.

Navigating the Challenges of AI Systems

While the benefits of AI are vast, even well-intentioned systems can have unintended consequences. One of the most persistent issues is bias and discrimination. Because AI models reflect the data they are given, discriminatory data tends to produce discriminatory outcomes. As an actionable tip, when you are dealing with AI-informed decisions, try to spot patterns that look unfair. Providers that publish audit results, such as how many fairness checks a system has passed, tend to give a more reliable indication of fairness than those that offer only general assurances.

Another challenge is the lack of explainability. Some deep learning models are essentially "black boxes," where the decision-making process is so complex that even the people who built the model cannot fully explain it. In fields like finance, health, or law, you should prefer solutions that explain why they produced a given result.

Furthermore, blind trust in AI can be harmful. It is important for people to verify the information these systems provide. Use AI for support and efficiency, but not for final decision-making, and always review critical information manually. Additionally, be mindful of privacy. AI often needs access to considerable amounts of personal data, and poor handling can lead to leaks. Limit the permissions you grant and use services that offer strong encryption.

Finally, we must consider the ethical implications of job displacement. AI is capable of automating many repetitive or routine tasks, which can affect the workforce. The ethical response involves a commitment to retraining and reskilling. For individuals, the best strategy is to develop skills that complement AI, such as strategic thinking, creativity, and oversight.

Tips for Using AI Responsibly

Whether you are using AI for personal reasons, as an employee, or as an entrepreneur, you can make a difference by using it responsibly. First, never be afraid to ask questions. Don't rely on an answer given by an AI without questioning the reasoning behind the recommendation. Second, always check for transparency and make sure the provider is open about its methods.

Third, protect your data by reading privacy policies carefully and opting for data anonymization whenever possible. Fourth, if you notice an unfair or erroneous outcome, report it. Many AI platforms provide a way to report bias and are receptive to such feedback. Lastly, stay informed. The world of AI moves fast, and keeping up with the latest developments is the best way to remain proactive and alert.

The Future of Ethical AI

In the next five years, responsible or ethical AI will likely move from being an optional "nice-to-have" to an obligation. Governments and international institutions are already establishing rules and guidelines to ensure that AI remains trustworthy. Major examples include the EU AI Act, which sets strict requirements for high-risk AI applications, and the OECD AI Principles, which provide international guidelines covering fairness, accountability, and security.

Many major companies, including Google and Microsoft, have published their own AI principles and set up internal bodies to review AI ethics questions. Moving forward, users should look for applications that offer more accountability, regular audits, and high levels of transparency. Responsible AI is not just a topic for developers or policymakers; it matters to everyone who interacts with these systems daily.

By understanding the key principles of fairness, accountability, transparency, privacy, and safety, we can help ensure that AI makes a positive difference in our lives. The next five years will be a crucial period for making ethical AI the norm and for balancing rapid innovation with enduring human values. Staying informed and proactive is the best way to ensure that AI remains a tool for humans, rather than the other way around.